Three key perspectives on Emotional AI and why you should be aware of them

What if your phone could send you a notification when you were becoming anxious, before you’d even realized it?

Or what if you could scan your toddler’s face and understand that they were nervous?

Emotional AI is a field that questions what it means to have feelings, what it means to emote, and in essence, what it means to be human.

In today’s digital world, we already communicate through screens, whether on Zoom calls, Snapchat, or text messages. Mistakes are common: tone gets lost and context goes missing. And while communication problems arise in person as well as online, Emotional AI is the field that lets us ask: what if it could all be optimised?

What is Emotional Artificial Intelligence?

Emotional AI, sometimes called Emotion AI or affective computing, is a subset of AI whose goal is to measure, understand, simulate, and react to human emotions.

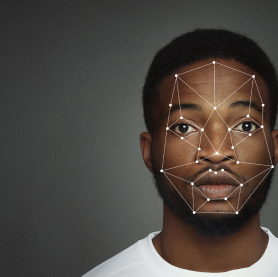

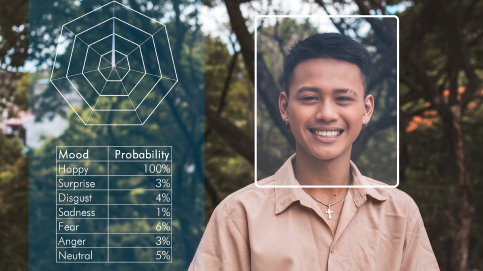

Machines programmed to have this kind of ‘emotional intelligence’ are trained to learn from patterns in cognition as well as emotion. The goal is for the machine to be able to “detect, interpret, and respond appropriately to both verbal and nonverbal signals”. Images, videos, and audio recordings are fed into recognition systems as input, and as more data comes in, the machines ‘learn’ to recognize and interpret subtleties in facial expression and voice intonation.

In some projects, researchers are also working with parameters such as skin temperature and heart rate, which provide additional data points for identifying emotional response.
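To illustrate what ‘learning from labelled examples’ can look like, here is a minimal Python sketch, assuming each recording has already been reduced to a few numeric features. The features (brow lowering, smile intensity, voice pitch variation, and a normalised heart rate), the emotion labels, the toy data, and the nearest-centroid method are all hypothetical choices for illustration; real systems typically use far richer features and models such as deep neural networks.

```python
from math import dist
from statistics import fmean

# Hypothetical feature vector, each value scaled to 0..1 for illustration:
# (brow_lowering, smile_intensity, voice_pitch_var, heart_rate_norm)

def train_centroids(examples):
    """Average the feature vectors for each emotion label (nearest-centroid training)."""
    by_label = {}
    for features, label in examples:
        by_label.setdefault(label, []).append(features)
    return {
        label: tuple(fmean(column) for column in zip(*vectors))
        for label, vectors in by_label.items()
    }

def predict(centroids, features):
    """Return the label whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: dist(centroids[label], features))

# Toy, made-up training data: each tuple is (feature vector, label).
training = [
    ((0.8, 0.1, 0.7, 0.8), "anxious"),
    ((0.7, 0.2, 0.6, 0.9), "anxious"),
    ((0.1, 0.9, 0.4, 0.5), "happy"),
    ((0.2, 0.8, 0.5, 0.4), "happy"),
    ((0.2, 0.3, 0.1, 0.2), "calm"),
    ((0.1, 0.4, 0.2, 0.3), "calm"),
]

centroids = train_centroids(training)
print(predict(centroids, (0.75, 0.15, 0.65, 0.85)))  # anxious
```

Adding more labelled examples shifts each centroid, a very rough analogue of machines ‘learning’ as more data is input.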

For now, humans still clearly have the upper hand when it comes to detecting and identifying emotions, but machines are improving quickly. Modern technology can process vast amounts of detailed data very quickly: a computer might scan millions of photographs for microexpressions, or analyze thousands of recordings of the human voice with different inflections.

A change in perspective

For humans, understanding emotion is part of everyday life, and studies have shown that socialization and communication are crucial parts of child development. But Emotional AI may change how and why we value emotions. Researchers have proposed ways Emotional AI might fit into our society, but some of them may have more repercussions than we realize. Here are three uses and perspectives to consider:

- Marketing – Digital marketers and decision makers already know that emotions play a huge role in the customer journey. From a sales and advertising perspective, knowing how a user is feeling is the juiciest information out there. If marketers can detect frustration, desire, or a pain point, they can build sales funnels that soothe the frustration, satisfy the desire, and relieve the pain point with their products and services. Emotional AI could transform e-commerce dramatically by identifying microexpressions in footage captured by a customer’s smartphone or webcam, and correlating that data with the action the customer takes (like buying the product or sharing a link). And if you’re wondering when this technology will arrive, look no further: it has been on the market for the last decade.

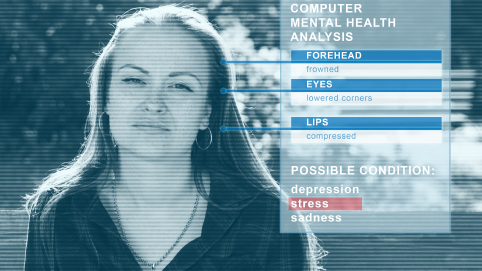

- Assistive Services – A broad category of projects and research, Assistive Services covers products that can improve people’s experiences. For Emotional AI, this might mean training exercises that help neurodivergent people identify emotions in others or express their own. Other technologies support healthcare, for example by identifying people who need mental health assistance.

Technologies in this category are also being used in the automotive industry, where interactions based on drivers’ emotions and reactions could increase road safety. By measuring emotional signals such as blood pressure, voice volume, and microexpressions, smart cars could be built with reactive controls. Prompting drivers to relax or refocus could help reduce road rage, keep distracted drivers alert, or get sleepy drivers to take a break.

- Ethics – While there are no definitive answers, most of us have at some point questioned what is ethical about AI, and what makes us uncomfortable. As the industry grows and Emotional AI takes its place in popular technology, philosophers and psychologists are asking questions about privacy, ethical use, and potential impacts. For example, CCTV cameras let retail stores record customer reactions to products and prices in real time, use them to adjust their range and pricing, and feed the information into their advertising campaigns. Being recorded without your knowledge or permission is one conversation, but Emotional AI also requires us to think about whether we are comfortable with companies leveraging the information they have about us. What’s your limit?
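To make the automotive example under Assistive Services more concrete, here is a minimal rule-based Python sketch of how a car might turn measured signals into a prompt for the driver. The signal names, units, thresholds, and messages are all hypothetical and chosen for illustration only; a real system would rely on validated models and much more careful calibration.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DriverReading:
    # Hypothetical signals; units and thresholds below are illustrative assumptions.
    systolic_bp: float        # blood pressure in mmHg
    voice_volume_db: float    # cabin microphone level in dB
    eyes_closed_ratio: float  # share of recent frames with eyes closed (0..1)
    gaze_off_road_s: float    # seconds the driver has looked away from the road

def driver_prompt(r: DriverReading) -> Optional[str]:
    """Return a prompt for the driver, or None if no intervention seems needed.

    Checks run in an assumed order of urgency: drowsiness first,
    then distraction, then signs of stress or anger.
    """
    if r.eyes_closed_ratio > 0.3:
        return "You seem tired. Consider taking a break."
    if r.gaze_off_road_s > 2.0:
        return "Eyes on the road, please."
    if r.systolic_bp > 140 and r.voice_volume_db > 75:
        return "Take a deep breath and try to relax."
    return None

reading = DriverReading(systolic_bp=150, voice_volume_db=80,
                        eyes_closed_ratio=0.05, gaze_off_road_s=0.5)
print(driver_prompt(reading))  # Take a deep breath and try to relax.
```

Every threshold in a sketch like this is a judgment call made by whoever builds the system.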

Emotional AI is a constantly expanding field, but bear in mind that technology may only be as good as its programmers. To build systems that identify emotions, whether from facial expressions, voice inflections, or some hard-to-articulate level of empathy, programmers need extremely high emotional intelligence themselves. Emotional AI also relies heavily on self-reported data, which is not always as accurate as the people reporting it might think.

When we approach technology from the point of view that we can help others, increase empathy, and solve problems, the world opens up to us. Emotional AI is a rich realm of multidisciplinary research with potential for wonderful solutions.

If you’re looking for an out-of-the-box solution, Cloutel just might have it. Ready to explore with us?